Introduction

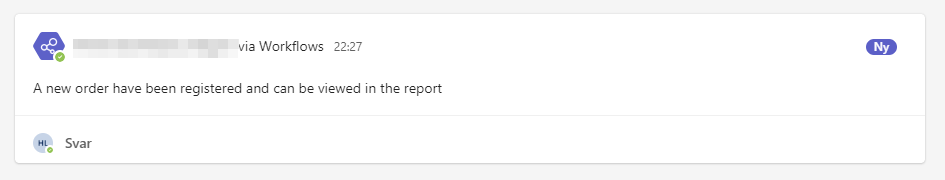

PromptFlow, our AI chatbot for Microsoft Teams, streamlines race strategy and team collaboration by combining several Microsoft cloud services. This write-up covers the three badges it targets: “Plug N’ Play,” “Crawler,” and “Stairway To Heaven.”

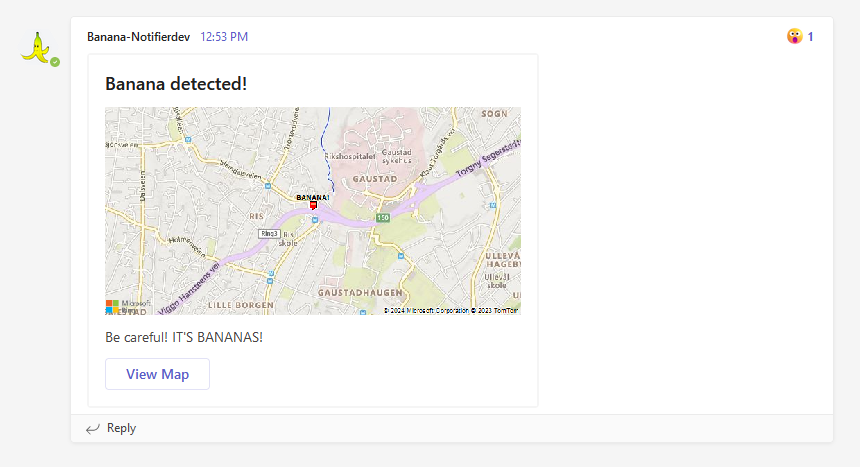

Plug N’ Play: Enhancing Microsoft Teams with AI

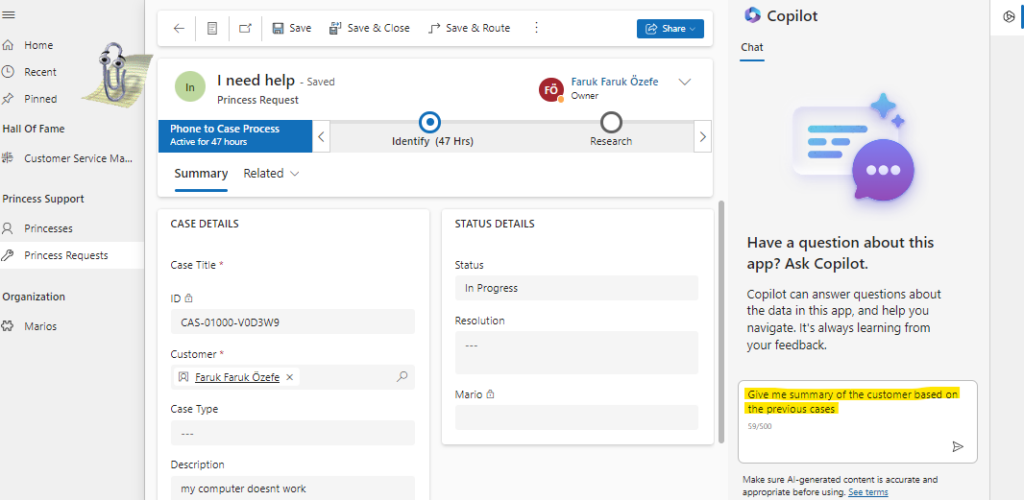

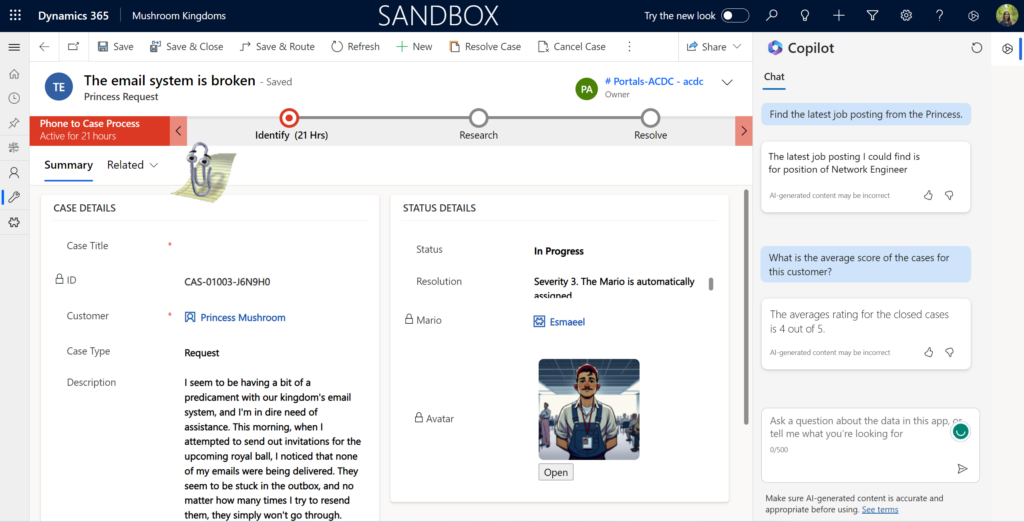

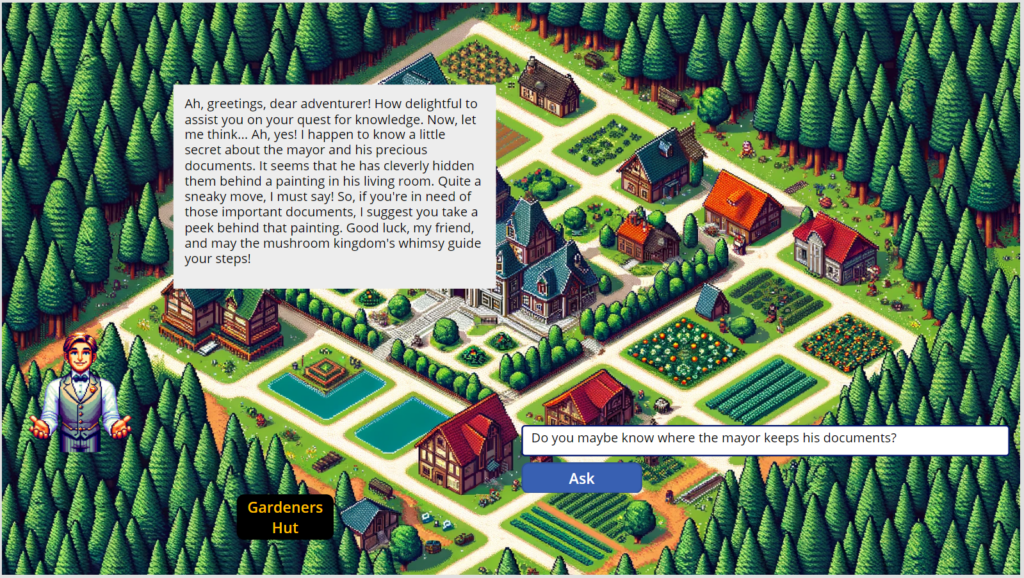

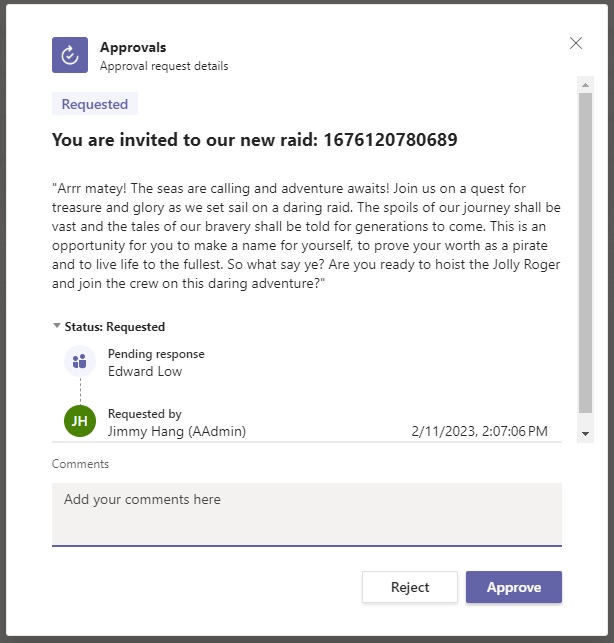

PromptFlow brings automated, data-driven race-strategy insights into Microsoft Teams. Drawing on more than 5,000 race statistics, it lets users ask about lap times, kart performance, and player stats in natural language without leaving Teams.
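The write-up does not show how a natural-language question turns into a lookup over the race statistics. In a production bot an LLM would typically interpret the question; the minimal sketch below swaps in simple keyword rules so the idea is visible on its own. The `LapRecord` fields, the `answer_query` function, and the sample laps are hypothetical, not PromptFlow's actual schema or data.

```python
import re
from dataclasses import dataclass


@dataclass
class LapRecord:
    # Hypothetical schema for one lap in the race-statistics dataset
    player: str
    kart: int
    lap_time_s: float


def answer_query(question: str, records: list[LapRecord]) -> str:
    """Answer a simple lap-time question with keyword rules (a stand-in for an LLM)."""
    q = question.lower()
    rows = records

    # Filter by kart number if the question mentions e.g. "kart 7"
    kart_match = re.search(r"\bkart\s+(\d+)\b", q)
    if kart_match:
        kart = int(kart_match.group(1))
        rows = [r for r in rows if r.kart == kart]

    # Filter by any player name that appears as a whole word
    for name in sorted({r.player for r in records}):
        if re.search(rf"\b{re.escape(name.lower())}\b", q):
            rows = [r for r in rows if r.player == name]

    if not rows:
        return "No matching laps found."

    # "fastest"/"best" asks for the minimum lap time; otherwise report the average
    if "fastest" in q or "best" in q:
        best = min(rows, key=lambda r: r.lap_time_s)
        return f"Fastest lap: {best.lap_time_s:.3f}s by {best.player} in kart {best.kart}"
    avg = sum(r.lap_time_s for r in rows) / len(rows)
    return f"Average lap over {len(rows)} laps: {avg:.3f}s"


# Usage with made-up sample laps
laps = [LapRecord("Ana", 7, 41.2), LapRecord("Ben", 7, 40.8), LapRecord("Ana", 3, 42.0)]
print(answer_query("Fastest lap for kart 7?", laps))  # Fastest lap: 40.800s by Ben in kart 7
```

Keyword rules break down quickly on varied phrasing, which is exactly the gap an LLM fills; the filtering and aggregation steps stay the same either way.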

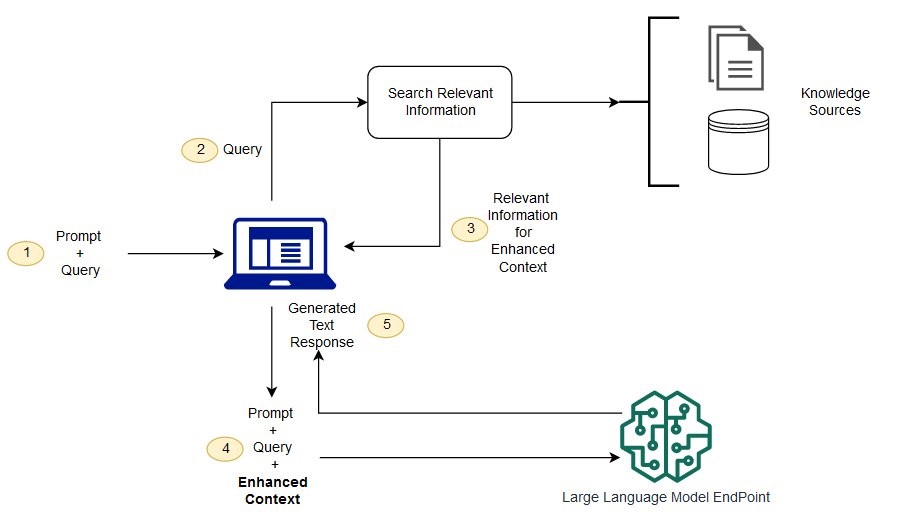

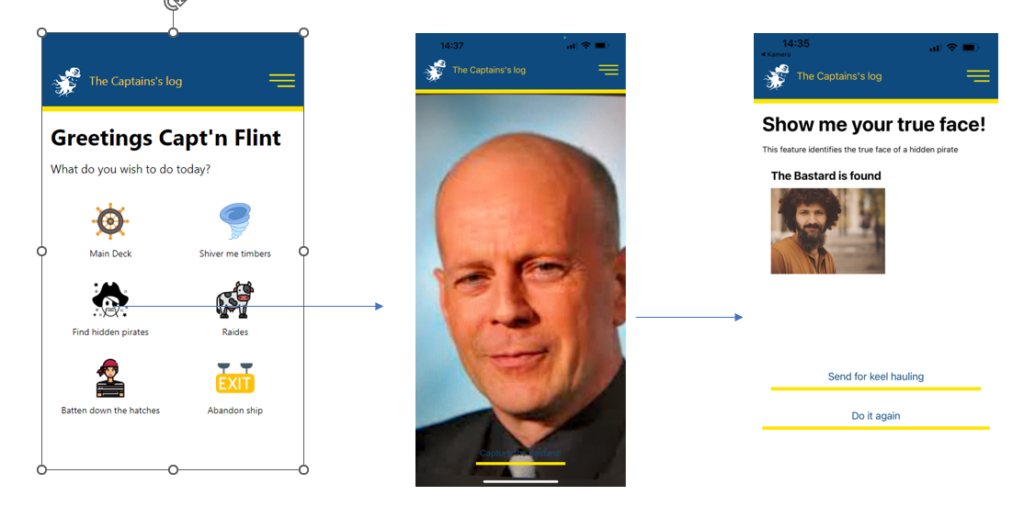

Crawler: Transforming Race Strategy with AI Search

The “Crawler” badge recognizes PromptFlow’s use of AI search to navigate extensive race data. Racing teams need fast, accurate answers when making strategy calls, and retrieving the relevant statistics on demand gives them a significant competitive edge.
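To illustrate what keyword-based search does with race data, here is a minimal in-memory ranker using BM25, a standard relevance formula for full-text search. It is a stand-in written for illustration, not the Azure AI Search API; `BM25Index`, its parameters, and the sample documents are hypothetical.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    # Lowercase and split on anything that is not a letter or digit
    return re.findall(r"[a-z0-9]+", text.lower())


class BM25Index:
    """Minimal in-memory BM25 index (illustrative stand-in for a managed search service)."""

    def __init__(self, docs: list[str], k1: float = 1.5, b: float = 0.75):
        self.docs = docs
        self.k1, self.b = k1, b
        self.tokens = [tokenize(d) for d in docs]
        self.tf = [Counter(t) for t in self.tokens]
        self.avgdl = sum(len(t) for t in self.tokens) / max(len(docs), 1)
        n = len(docs)
        df = Counter(term for t in self.tokens for term in set(t))
        # BM25 IDF with +1 inside the log so every weight stays positive
        self.idf = {term: math.log(1 + (n - f + 0.5) / (f + 0.5)) for term, f in df.items()}

    def score(self, query: str, i: int) -> float:
        dl = len(self.tokens[i])
        total = 0.0
        for term in tokenize(query):
            f = self.tf[i].get(term, 0)
            if f == 0:
                continue
            # Term-frequency saturation (k1) and document-length normalization (b)
            denom = f + self.k1 * (1 - self.b + self.b * dl / self.avgdl)
            total += self.idf[term] * f * (self.k1 + 1) / denom
        return total

    def search(self, query: str, top: int = 3) -> list[tuple[str, float]]:
        scored = [(doc, self.score(query, i)) for i, doc in enumerate(self.docs)]
        scored = [hit for hit in scored if hit[1] > 0]  # drop documents with no matching term
        scored.sort(key=lambda hit: hit[1], reverse=True)
        return scored[:top]


# Usage with made-up race notes
docs = [
    "Kart 7 lap time 41.2 seconds on a wet track",
    "Kart 3 tyre wear high on a dry track",
    "Pit stop strategy for wet conditions",
]
index = BM25Index(docs)
for doc, s in index.search("wet kart 7"):
    print(f"{s:.2f}  {doc}")
```

Rare terms such as a specific kart number carry more weight than common ones, so the note mentioning all three query terms ranks first.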

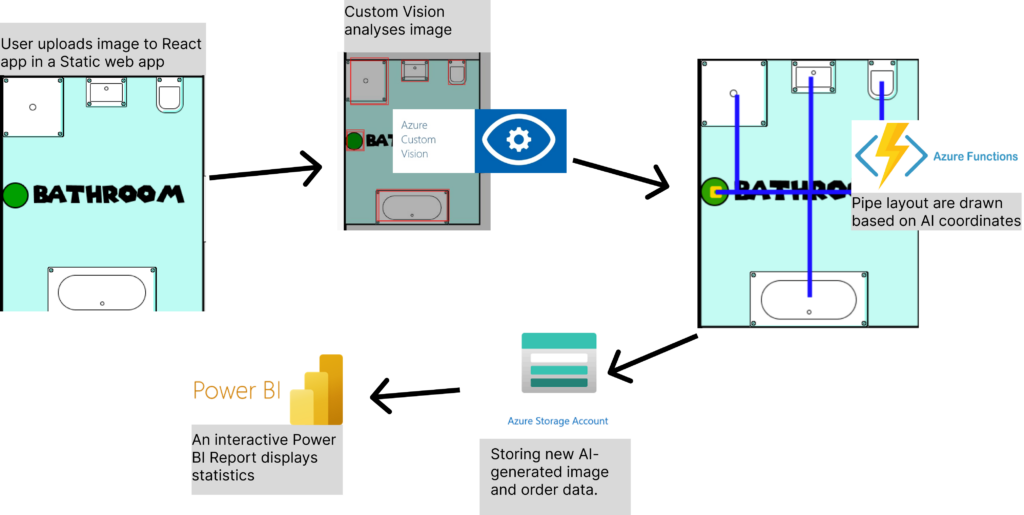

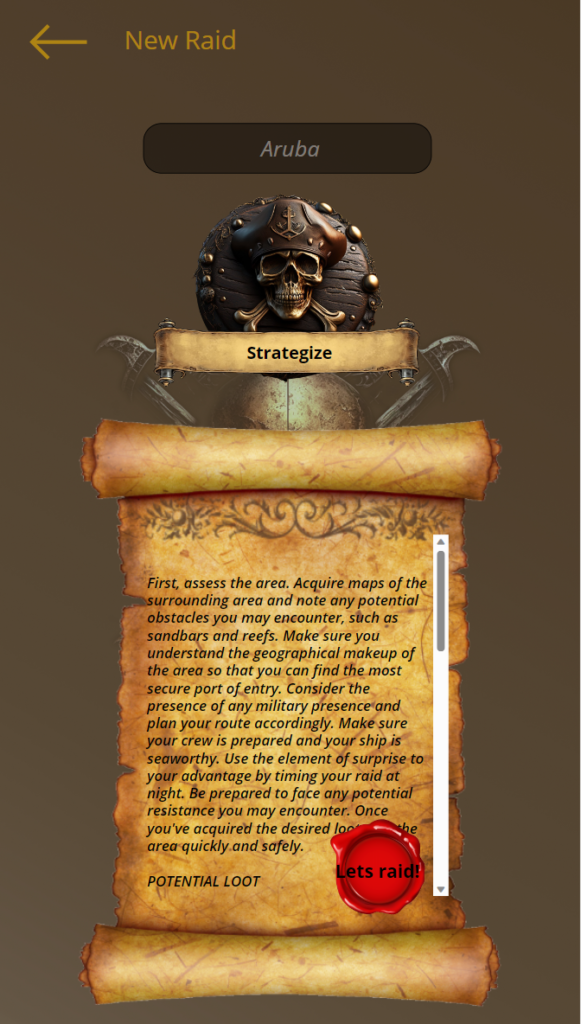

Stairway To Heaven: A Symphony of Microsoft Cloud APIs

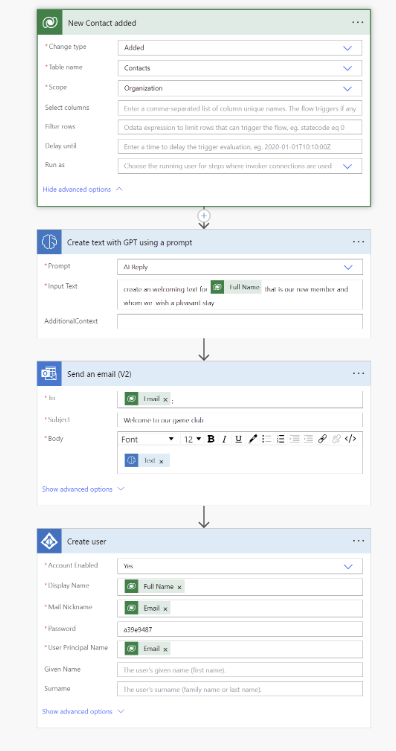

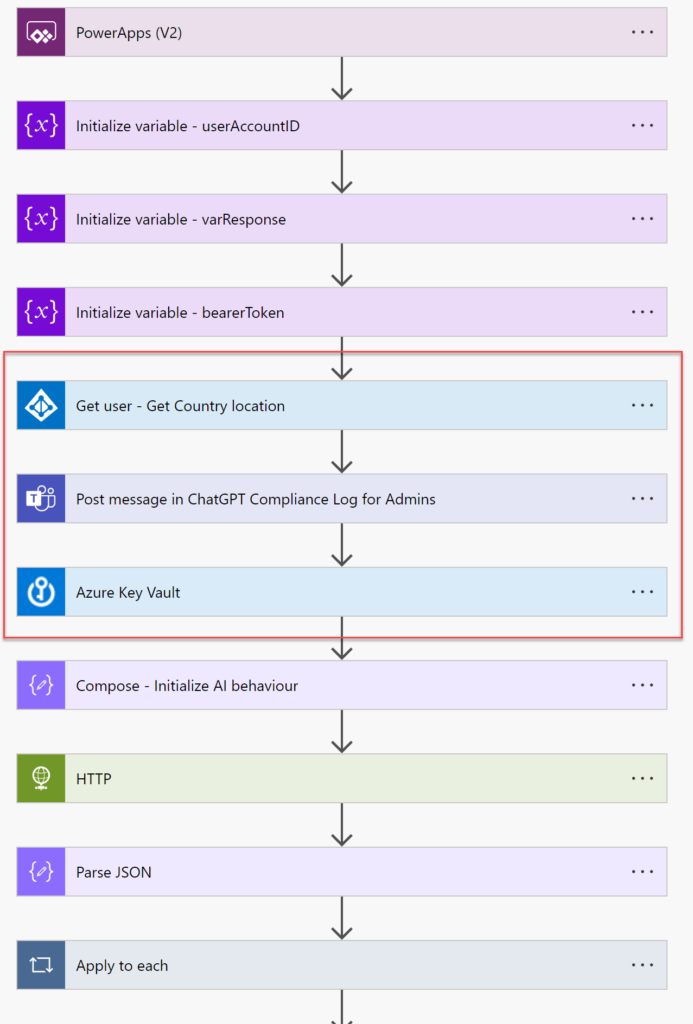

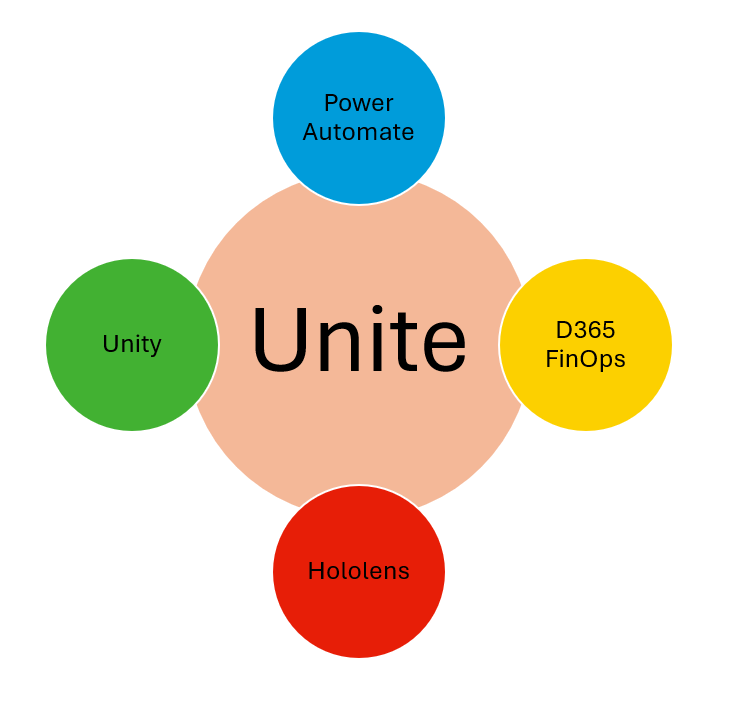

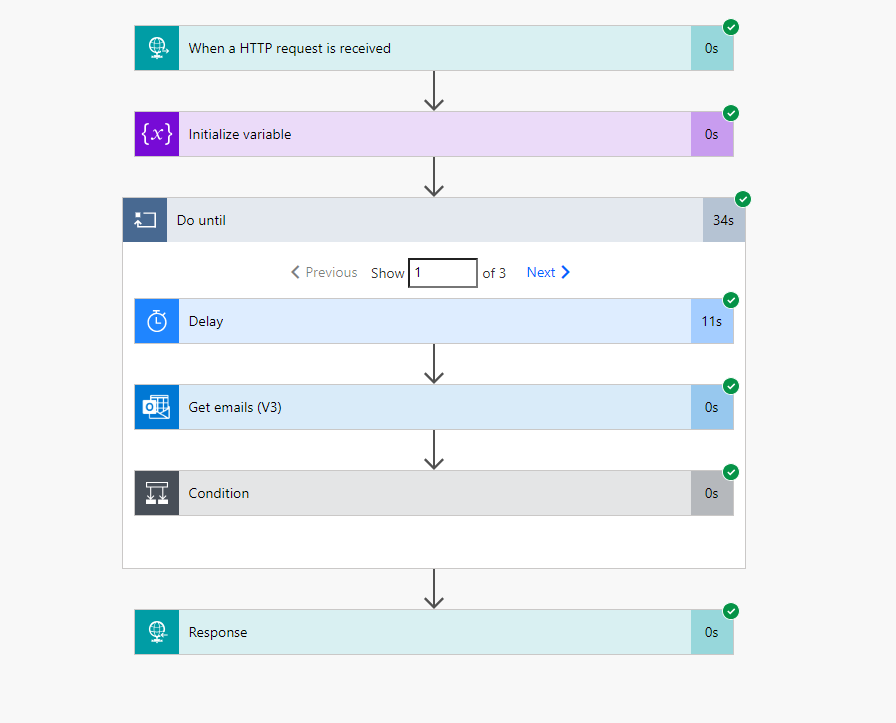

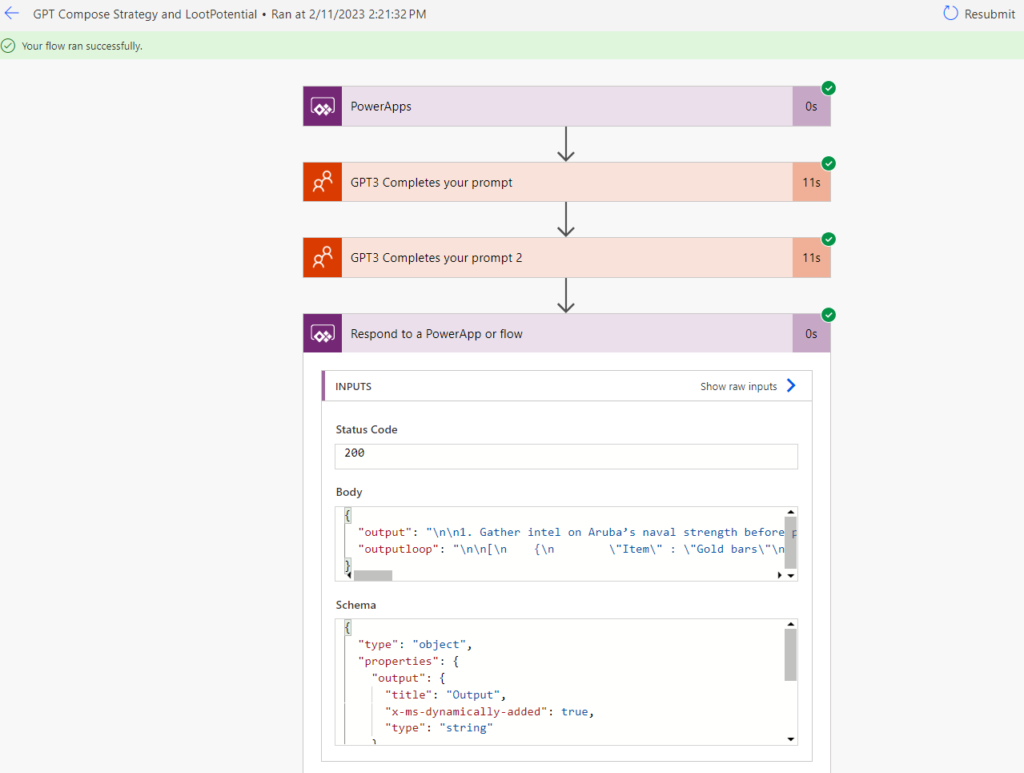

To earn the “Stairway To Heaven” badge, PromptFlow combines multiple Microsoft cloud APIs:

- AI Search: Powers the core functionality, enabling efficient data retrieval.

- Sentiment Analysis: Enhances user interaction by adapting responses based on detected sentiment.

- Prompt flow: Orchestrates each conversation, chaining retrieval, sentiment detection, and response generation into smooth, natural exchanges.

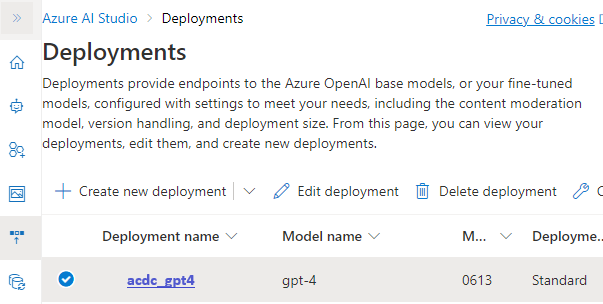

- Azure OpenAI Instance: The backbone providing computational power and advanced LLM capabilities.
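The services above can be chained once per message. The sketch below assumes hypothetical `search`, `sentiment`, and `chat` callables that would wrap Azure AI Search, a sentiment-analysis service, and an Azure OpenAI chat deployment; it shows one way to ground the answer in retrieved data and adapt its tone to the detected sentiment. The prompt wording, the `TONE` rules, and the function names are illustrative assumptions, not PromptFlow's actual flow.

```python
from typing import Callable

# Hypothetical interfaces: in a real deployment these would wrap Azure AI Search,
# a sentiment-analysis service, and an Azure OpenAI chat deployment.
SearchFn = Callable[[str], list[str]]
SentimentFn = Callable[[str], str]  # e.g. "positive", "neutral", "negative"
ChatFn = Callable[[list[dict[str, str]]], str]

# Illustrative tone instructions keyed by sentiment label
TONE = {
    "negative": "The user seems frustrated: be concise, acknowledge the issue, and give one clear next step.",
    "positive": "The user is upbeat: keep the energy and suggest a follow-up question.",
    "neutral": "Answer directly and factually.",
}


def build_messages(question: str, context: list[str], sentiment: str) -> list[dict[str, str]]:
    """Assemble a chat request grounded in retrieved race data, with a sentiment-based tone."""
    tone = TONE.get(sentiment, TONE["neutral"])  # unknown labels (e.g. "mixed") fall back to neutral
    context_block = "\n".join(f"- {c}" for c in context)
    system = (
        "You are a race-strategy assistant. Answer only from the context below. "
        f"{tone}\n\nContext:\n{context_block}"
    )
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": question},
    ]


def answer(question: str, search: SearchFn, sentiment: SentimentFn, chat: ChatFn) -> str:
    context = search(question)                          # 1. retrieve relevant race records
    mood = sentiment(question)                          # 2. detect the user's sentiment
    messages = build_messages(question, context, mood)  # 3. ground the prompt and set the tone
    return chat(messages)                               # 4. generate the reply with the LLM


# Usage with stub services (no network calls)
reply = answer(
    "Why is kart 7 so slow?!",
    search=lambda q: ["Kart 7 average lap: 41.2s"],
    sentiment=lambda q: "negative",
    chat=lambda msgs: msgs[0]["content"],  # echo the system prompt for inspection
)
print(reply)
```

Passing the services in as callables keeps the orchestration testable with stubs and lets each real client be swapped in without touching the flow itself.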

This integration meets the badge’s criteria and shows how several cloud services can work together in a single AI solution.

Technical Integration and Future Prospects

Each API handles a distinct part of the workflow (retrieval, sentiment detection, orchestration, and generation), and together they make PromptFlow a robust, intelligent system. This integration reflects our commitment to building advanced AI solutions on cloud technology and gives us a foundation for future features.

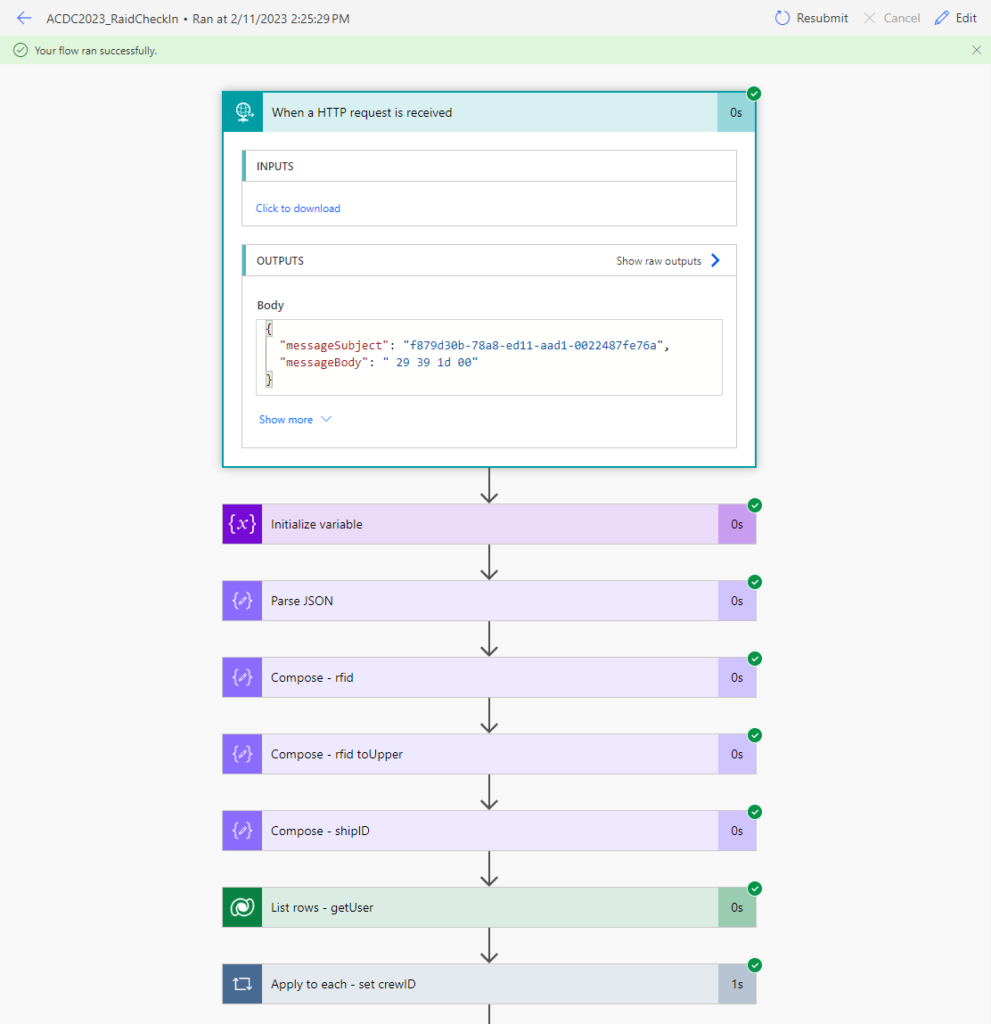

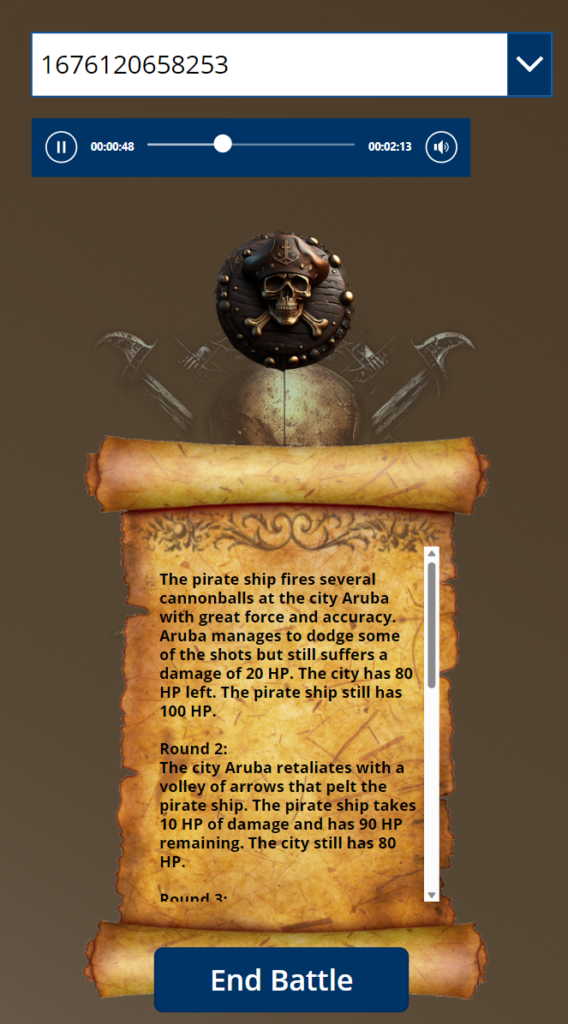

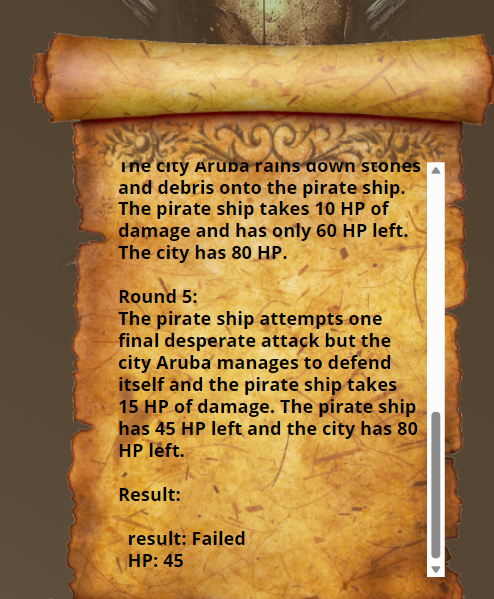

The solution/demo:

Conclusion

PromptFlow is more than a chatbot: it is a tool for developing race strategy and collaborating as a team. It demonstrates our ability to combine AI with cloud technologies, earning us the “Plug N’ Play,” “Crawler,” and “Stairway To Heaven” badges. As we continue to build on it, we look forward to exploring new directions in AI and cloud computing.
